I’m working my way up to designing a 4x4 element phased array antenna at 5.8 GHz. The idea is that two of them will be able to communicate with each other while one of them is moving, which means that they both need to be able to beamsteer. Just to get a better idea of how beamsteering works, I simulated a few simple¹ beamforming methods.

Phased Array background

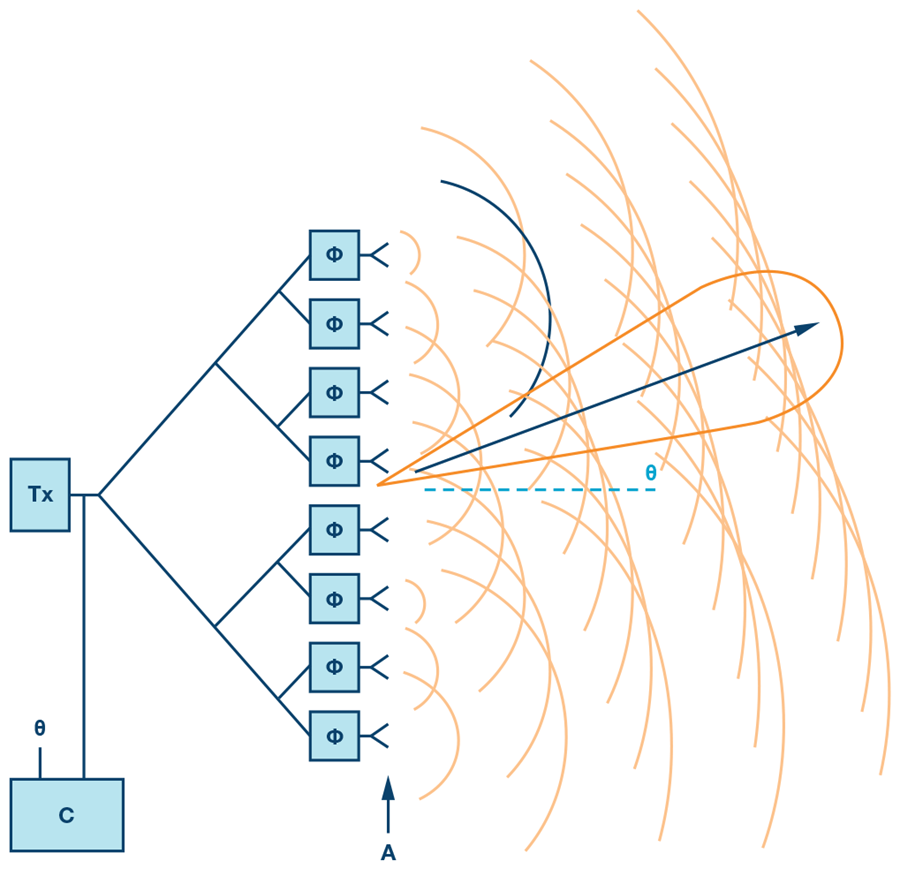

The basic idea of a phased array is pretty straightforward - it’s an array of antennas, where each has a different, electrically-controlled phase to allow for dynamic movement of the total output radiation. The most basic form of a phased array is a uniform (equally spaced and identical elements) linear (in a line) array. If we assume that each antenna element radiates in a nice circular shape and we phase shift the radiation by a bit for each successive element in the array, the individual waves form a uniform, flat wavefront that is steered in a direction:

Now let’s put some math to this - in this post I’m going to stick to these uniform linear arrays located on the $\hat{x}$ axis, and $\phi$ will be the angle off that axis (the azimuthal angle, by convention). To keep things simple, I’m also going to assume that each element is a Hertzian dipole. Then the total radiation in the far field (near-field radiation either dies out or is stored close to the source) is given by

\[\begin{align} \overline{E}_{ff}=\sum_{i=0}^{N-1}\widehat{\theta} \frac{j\eta_{0}kI_{i}d_{i}}{4\pi r_{i}}e^{-jkr_{i}}\sin(\theta_{i}) \end{align}\]

When the target is sufficiently far away from the antenna, the Fraunhofer far-field approximation becomes valid:

\[\begin{align} r_{i}\approx r- \widehat{r} \cdot \overrightarrow{a}_{i} \end{align}\]

And then you can separate the far field into two components - the coefficient out front that captures the radiation of a single element, known as the element factor, and the sum that captures the geometry of the array, known as the array factor:

\[\begin{align} \overline{E}_{ff}=\widehat{\theta} \frac{j\eta_{0}kI_{0}d_{0}}{4\pi r}e^{-jkr}\sin(\theta)\sum_{i=0}^{N-1}w_{i}e^{jk\widehat{r}\cdot\overrightarrow{a}_{i}} \end{align}\]

Here $I_0$ and $d_0$ are the current and dipole length of the reference element (the dipoles are identical, so $d_i = d_0$), and $w_i = I_i/I_0$ absorbs each element's relative excitation. The array factor is the important part since it captures the phase between array elements, which is a function of both the commanded phase ($w_i$) and the phase due to geometry and the target angle (the exponential term). $\hat{r}$ is the unit vector in the direction of the target, and $\vec{a}_i$ is the vector pointing from the center element of the array (or any reference element) to element $i$.
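The step from the sum to the factored form is worth spelling out. A sketch, assuming the array is small compared to $r$ so that the amplitude and angle are effectively the same for every element ($1/r_i \approx 1/r$, $\theta_i \approx \theta$), and only the phase keeps track of the per-element path difference:

\[\begin{align} e^{-jkr_{i}} \approx e^{-jk(r-\widehat{r}\cdot\overrightarrow{a}_{i})} = e^{-jkr}\,e^{jk\widehat{r}\cdot\overrightarrow{a}_{i}} \end{align}\]

The common factor $e^{-jkr}$ moves outside the sum with the rest of the element factor, and the remaining exponential is the geometric term inside the array factor.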

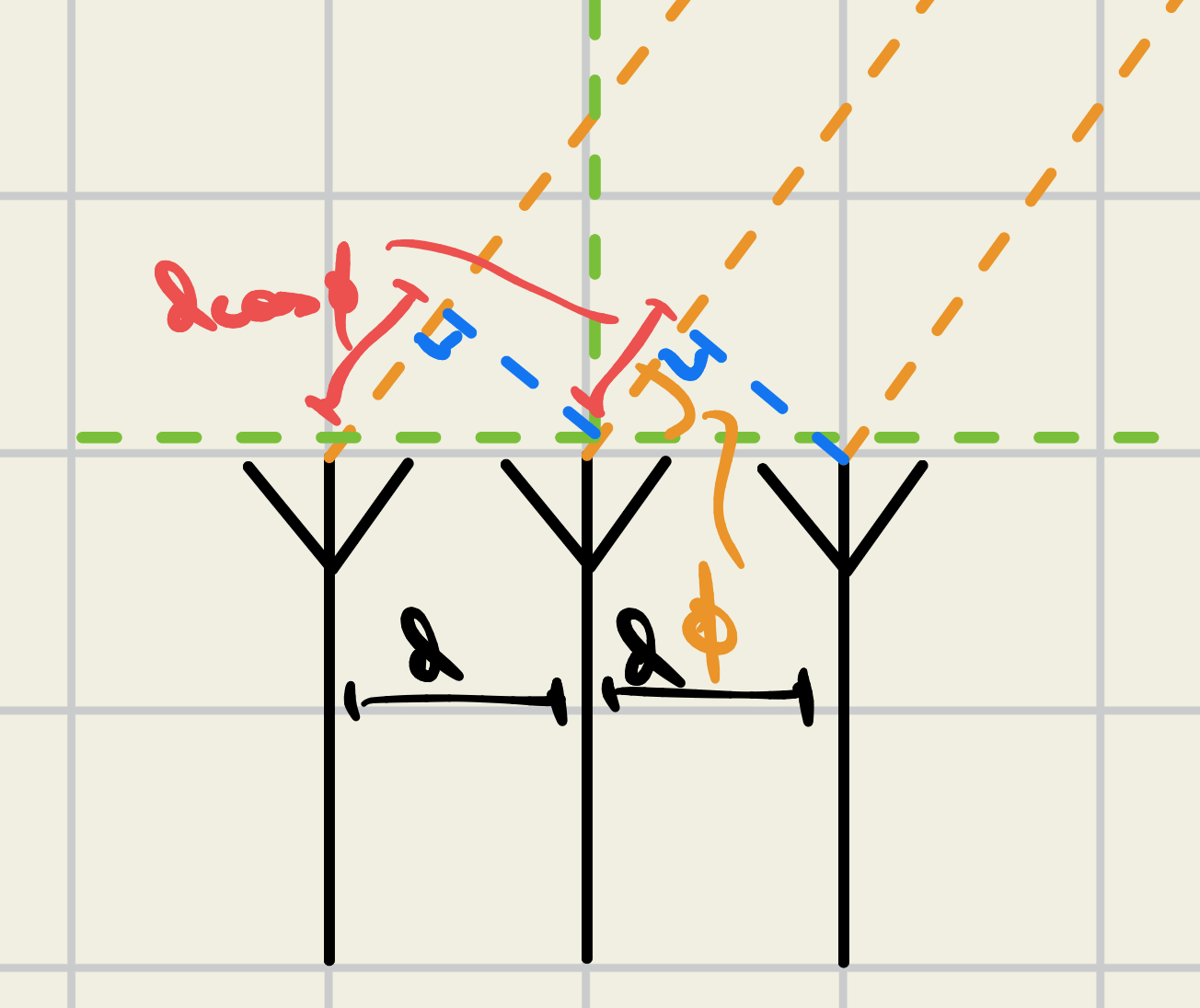

If we stay in the xy plane ($\theta = \frac{\pi}{2}$), then this is simply a cosine term

\[\begin{align} \widehat{r}\cdot \overrightarrow{a}_i = id\cos(\phi) \end{align}\]

… which will exist in the summation. The factor $i$ accounts for the phase increasing as you traverse across the array. To beamsteer (radiate in different directions), all you need to do is choose the weights $w_i$ so that they cancel this geometric phase at the desired $\phi$. This animation shows beamsteering from $\phi =0$ to $\phi = \pi$ radians by the two elements located on the red circles.
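To make the array factor concrete, here is a minimal NumPy sketch that evaluates it in the xy plane and steers the beam. The function name `array_factor`, the 4-element array, the half-wavelength spacing, and the 60 degree steering angle are all assumptions picked for illustration, not values from a real design.

```python
import numpy as np

def array_factor(phi, weights, d, k):
    """Array factor of a uniform linear array on the x axis, in the xy plane.

    phi     : observation angle(s) off the array axis, in radians
    weights : complex excitation w_i for each of the N elements
    d       : element spacing in metres
    k       : wavenumber 2*pi/lambda in rad/m
    """
    phi = np.atleast_1d(phi)
    weights = np.asarray(weights)
    i = np.arange(len(weights))
    # r_hat . a_i = i * d * cos(phi): one row per angle, one column per element
    geometric_phase = k * d * np.outer(np.cos(phi), i)
    return np.exp(1j * geometric_phase) @ weights

# Assumed example values: 5.8 GHz carrier, half-wavelength spacing, 4 elements
wavelength = 3e8 / 5.8e9
k = 2 * np.pi / wavelength
d = wavelength / 2
n_elements = 4

# Steer toward phi_0 by cancelling the geometric phase at that angle
phi_0 = np.deg2rad(60)
weights = np.exp(-1j * k * d * np.arange(n_elements) * np.cos(phi_0))

# Sweep the observation angle and find where the pattern peaks
phi_sweep = np.deg2rad(np.arange(0, 181))
pattern = np.abs(array_factor(phi_sweep, weights, d, k))
peak_deg = np.rad2deg(phi_sweep[np.argmax(pattern)])
```

With these weights every term in the sum has zero phase at $\phi_0$, so the elements add fully in phase there and the pattern magnitude reaches $N$.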

One quick thing to point out: beamforming for receive and transmit is identical. Steering an array to radiate in direction $\phi$ requires the same weights that the array would need to receive at angle $\phi$.

Adaptive Beamforming

For my application, I’m interested in both transmitting to and receiving from unknown directions: since both phased arrays will communicate back and forth, they both need to know where the other is. This is where adaptive beamforming comes in - constantly updating the steering angle to maximize signal power from the direction of interest. The methods I’m going to go over are conventional, Bartlett, and MVDR beamforming. These are pretty efficient and accurate for no more than a few beams (including sidelobes). Two other commonly used algorithms are ESPRIT and MUSIC, which are both cool but are probably too compute intensive for me to implement on my own array, so I’m not focusing on them now.

Conventional beamforming

Very simple - it applies a phase shift to all incoming signals for a given angle of arrival $\theta$, then adds them up to get an idea of how correlated they are (i.e. computes cross correlation).

Define the steering vector

\[\begin{align} \overrightarrow{s}_{N}^T=[e^{-jkd\cos(\phi)} \quad e^{-2jkd\cos(\phi)}\dots \quad e^{-Njkd\cos(\phi)}] \end{align}\]

to describe the phase lag perceived by each element in the linear array based on the azimuthal angle $\phi$ of the incoming signal. We model the set of incoming signals as:

\[Y_{N\times T}=\overrightarrow{s}(\phi)\,\overrightarrow{x}_{T}^T+W_{N \times T}\]

Where $\overrightarrow{x}$ is the source signal, $T$ samples long, so that $Y$ holds the signal received over $T$ samples across $N$ elements, and $W$ is Gaussian distributed random noise with variance $\sigma^2$, also $T$ samples long on each element. Assuming that each noise sample $\omega_{i} \in W$ has a small magnitude $\lvert \omega_{i} \rvert$ relative to the input signal (the SNR is high), we just shift $Y$ by a “test” angle $\theta_{t}$ using the Hermitian (conjugate transpose) of $\overrightarrow{s}$:

\[p(\theta_{t})=\frac{1}{N}\overrightarrow{s}^H(\theta_{t})\,Y\]

Where the conjugate is useful because it flips the phase lag. You end up with a single signal representing the normalized ‘average’ of the $N$ incident signals. All you need to do is compute the variance of $p$ for each test angle as you sweep over $\theta_t$, and select the angle with the highest variance (highest since the signals add constructively when they are aligned properly).

This method is not robust, because it requires high SNR.

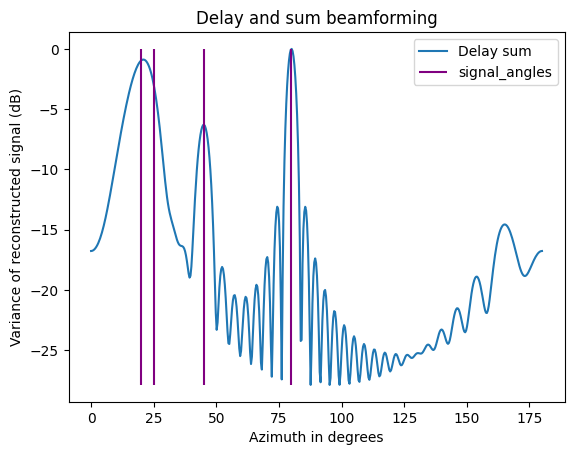

Here’s a plot of the variance across test steering angles. Purple lines indicate where incoming signals actually are - they’re located at 20, 25, 45, and 80 degrees.
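The sweep described above can be sketched in a few lines of NumPy. This is not the code behind the plot: it assumes a single source at 45 degrees (instead of four), 8 elements, half-wavelength spacing ($kd=\pi$), and complex Gaussian signal and noise. The helper names `steering_vector` and `conventional_spectrum` are made up for the example.

```python
import numpy as np

rng = np.random.default_rng(0)

def steering_vector(phi, n_elements, kd):
    """Phase lag at each element for a plane wave arriving from angle phi (radians)."""
    i = np.arange(1, n_elements + 1)
    return np.exp(-1j * i * kd * np.cos(phi))

def conventional_spectrum(Y, test_angles, kd):
    """Variance of the phase-aligned, averaged element signals for each test angle."""
    n_elements = Y.shape[0]
    spectrum = np.empty(len(test_angles))
    for idx, phi_t in enumerate(test_angles):
        s = steering_vector(phi_t, n_elements, kd)
        p = s.conj() @ Y / n_elements      # (1/N) s^H Y, a length-T signal
        spectrum[idx] = np.var(p)          # np.var of complex data is real
    return spectrum

# Assumed scenario: 8 elements, kd = pi, one source at 45 degrees, high SNR
n_elements, n_samples, kd = 8, 2000, np.pi
true_phi = np.deg2rad(45)
x = rng.standard_normal(n_samples) + 1j * rng.standard_normal(n_samples)
noise = 0.1 * (rng.standard_normal((n_elements, n_samples))
               + 1j * rng.standard_normal((n_elements, n_samples)))
Y = np.outer(steering_vector(true_phi, n_elements, kd), x) + noise

# Sweep the test angle in 1 degree steps and pick the highest variance
test_angles = np.deg2rad(np.arange(0, 181))
spectrum = conventional_spectrum(Y, test_angles, kd)
estimated_deg = np.rad2deg(test_angles[np.argmax(spectrum)])
```

Raising the noise scale from `0.1` is a quick way to see the high-SNR requirement: the peak gets buried as the noise floor comes up.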

Bartlett Beamforming

Though for some reason it tends to be described as different, it’s actually mathematically equivalent to conventional beamforming. In fact, if you wanted to implement it, you should probably implement conventional since you don’t need to compute the whole autocovariance / autocorrelation matrix ($R_{YY}$).

We start with an input signal $Y$, created by an ideal input $x$ and some additive noise $\omega$: \(Y_{T\times N}=\vec{x}_{T}\cdot\vec{s}(\theta)^T+\vec{\omega}_{T}\) This is the model from above; I’m going to rewrite it a little more cleanly so that time samples are aligned along columns: \(Y_{N\times T}=\vec{s}(\theta)\vec{x}_{T}^T+\vec{\omega}_{T}^T\)

The ideal beamformer would yield an ideal output signal: \(p(\theta_{t})=\frac{1}{N}\vec{s}^H\cdot Y\) For simplicity, I’m dropping the $\frac{1}{N}$ factor since it’s ugly. Now if we decide to compute the variance, we have: \(\begin{align} P&=\mathbf{E}[\lvert\vec{s}^H\cdot Y\rvert^2] \\ &=\mathbf{E}[(\vec{s}^H\cdot Y)(Y^H\vec{s})] \\ &=\vec{s}^H\mathbf{E}[YY^H]\vec{s} \\ &=\vec{s}^HR_{YY}\vec{s} \end{align}\) Then you just compute this across an angular sweep, and record the angle with the highest power as the angle of arrival. Most of the time, the variance is referred to as power (you’re maximizing power) because of the [relationship between variance and power](https://dsp.stackexchange.com/questions/72386/signal-variance-and-power-connection).

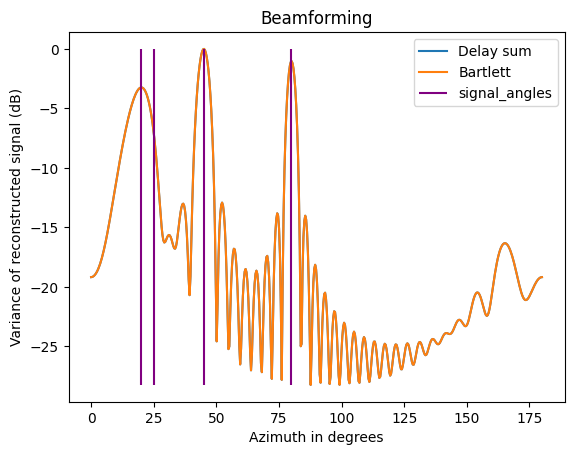

To show that conventional and Bartlett beamforming are equivalent, here they are plotted against each other for the same angles as before (I ran it again, so the noise + plots will be a little different):

Notice how neither the Bartlett nor the conventional is very selective, and neither can distinguish between the sources at 20 and 25 degrees. The tradeoff for computational efficiency (relative to other methods) is poor selectivity.
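The equivalence is easy to check numerically. A minimal sketch, assuming one zero-mean source at 45 degrees, 8 elements and $kd=\pi$ (all made-up values): the Bartlett spectrum uses the sample estimate of $R_{YY}$, and the conventional spectrum uses the mean power of $\vec{s}^H Y$ directly, with the $\frac{1}{N}$ factor dropped in both. Mean power is used rather than variance, which is the same thing for a zero-mean signal up to sampling error.

```python
import numpy as np

rng = np.random.default_rng(1)
n_elements, n_samples, kd = 8, 1000, np.pi

def steering_vector(phi):
    """Phase lag at each element for a plane wave arriving from angle phi (radians)."""
    i = np.arange(1, n_elements + 1)
    return np.exp(-1j * i * kd * np.cos(phi))

# Assumed scenario: one zero-mean complex source at 45 degrees plus noise
x = rng.standard_normal(n_samples) + 1j * rng.standard_normal(n_samples)
noise = 0.3 * (rng.standard_normal((n_elements, n_samples))
               + 1j * rng.standard_normal((n_elements, n_samples)))
Y = np.outer(steering_vector(np.deg2rad(45)), x) + noise

# Sample estimate of R_YY = E[Y Y^H]
R_yy = Y @ Y.conj().T / n_samples

test_angles = np.deg2rad(np.arange(0, 181))

# Bartlett: s^H R_YY s for each test angle
bartlett = np.array([
    np.real(steering_vector(t).conj() @ R_yy @ steering_vector(t))
    for t in test_angles
])

# Conventional: mean power of the phase-aligned sum s^H Y, no covariance matrix
conventional = np.array([
    np.mean(np.abs(steering_vector(t).conj() @ Y) ** 2)
    for t in test_angles
])

max_difference = np.max(np.abs(bartlett - conventional))
```

The two arrays agree to floating-point precision, since $\vec{s}^H (YY^H/T)\,\vec{s}$ is just the time average of $\lvert\vec{s}^H Y\rvert^2$ written differently.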

Capon (MVDR) beamformer

Notes from here

Assume $a(\theta)\in \mathbb{C}^N$ is the response of the array to a plane wave of unit amplitude from direction $\theta$. The source signal is $s(t)$, so the array output can be given by: \(y(t) = a(\theta)s(t)+v(t)\) Where $v$ is AWGN. Choose $w$ to be the vector of weights (phase shifts and gains) applied by the beamformer to recover the original signal, with $w^*$ denoting the conjugate transpose of $w$: \(\begin{align} y_{c}(k)&=w^*y(k) \\ &=w^*a(\theta)s(k)+w^*v(k) \end{align}\) Of course, if $w^*a(\theta)=1$ and the noise is small, $y_{c}\approx s$ and we recover the original signal. From the Bartlett beamforming method, we know that the variance (power) of the weighted received signal is: \(P=w^*R_{y}w\) where $R_{y}$ is the same autocovariance matrix as $R_{YY}$ above. But of course, we can also look at the noise power (derived identically, just ignored by the Bartlett): \(P_{v}=w^*R_{v}w\)

Where $R_{v}$ is the autocovariance matrix of the noise. If we know $a(\theta)$ and $R_{v}$, we solve for $w$ such that: \(\begin{aligned} \min_{w} \quad & w^{*} R_v w \\ \text{subject to} \quad & w^{*} a(\theta_d) = 1 \end{aligned}\) The thing is, instead of using $R_{v}$ directly (we usually don’t know it), we can just use the autocovariance matrix of the received signal: \(\begin{aligned} \min_{w} \quad & w^{*} R_y w \\ \text{subject to} \quad & w^{*} a(\theta_d) = 1 \end{aligned}\) This works because $R_{y}$ contains the noise, and the constraint keeps the gain toward $\theta_d$ fixed at 1, so the only way to lower the output power is to suppress noise and signals arriving from other directions. This is just the Bartlett equation now, except that the constraint forces the optimizer to choose a $w$ that is steered toward $\theta_d$ (inverse of the geometrically-derived phase shift) with appropriate scalings (accounting for sidelobes or multiple sources) to have minimum power. When $\theta_{d}$ (the test angle) is steered in the right direction, the source cannot be suppressed, so the minimized output power $w^{*}R_{y}w$ peaks there.

The closed-form solution is given by \(w_{mv}= \frac{R_{y}^{-1}a(\theta)}{a(\theta)^HR_{y}^{-1}a(\theta)}\) (the $^H$ and $^*$ superscripts both denote the conjugate transpose). We can capture the effectiveness of a beamformer by its signal-to-interference-plus-noise ratio (SINR): \(\text{SINR}=\frac{\sigma_{d}^2|w^Ha(\theta)|^2}{w^HR_{v}w}\) where $\sigma_{d}^2$ is the power of the desired signal. Note that when dealing with AWGN and a uniform input signal (so the SNR is equal on each element, and the scalar weight on each element should be uniform), the Capon is equivalent to the Bartlett; at each step of the sweep (each test angle $\theta_{d}$) we just compute $w^HR_{y}w$. The Capon becomes useful when there are multiple signals or the array geometry produces sidelobes, since it will compute the optimal weights $\vec{w}$ to null signals incident from the wrong direction.
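Here is a minimal NumPy sketch of the closed-form weights and the resulting angular sweep, next to the Bartlett spectrum for comparison. Everything about the scenario is an assumption for illustration: two uncorrelated sources at 20 and 25 degrees, 8 elements, $kd=\pi$, and a sample estimate of $R_y$. The helper `mvdr_weights` is a made-up name, and it uses a linear solve rather than forming $R_y^{-1}$ explicitly.

```python
import numpy as np

rng = np.random.default_rng(2)
n_elements, n_samples, kd = 8, 5000, np.pi

def steering_vector(phi):
    """Array response a(theta) for a unit plane wave from angle phi (radians)."""
    i = np.arange(1, n_elements + 1)
    return np.exp(-1j * i * kd * np.cos(phi))

def mvdr_weights(R_y, a):
    """w = R^-1 a / (a^H R^-1 a), computed with a linear solve."""
    r_inv_a = np.linalg.solve(R_y, a)
    return r_inv_a / (a.conj() @ r_inv_a)

# Assumed scenario: two uncorrelated sources plus white noise
source_deg = [20.0, 25.0]
A = np.column_stack([steering_vector(np.deg2rad(p)) for p in source_deg])
S = rng.standard_normal((2, n_samples)) + 1j * rng.standard_normal((2, n_samples))
noise = 0.1 * (rng.standard_normal((n_elements, n_samples))
               + 1j * rng.standard_normal((n_elements, n_samples)))
Y = A @ S + noise
R_y = Y @ Y.conj().T / n_samples   # sample autocovariance matrix

test_deg = np.arange(0, 181)
mvdr = np.empty(len(test_deg))
bartlett = np.empty(len(test_deg))
for idx, deg in enumerate(test_deg):
    a = steering_vector(np.deg2rad(deg))
    w = mvdr_weights(R_y, a)
    mvdr[idx] = np.real(w.conj() @ R_y @ w)                    # minimized output power
    bartlett[idx] = np.real(a.conj() @ R_y @ a) / n_elements**2  # w = a/N
```

The Bartlett weights $a/N$ also satisfy the unity-gain constraint, so the MVDR output power can never exceed the Bartlett power at any test angle; whatever it removes is energy that was leaking in from other directions.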

The medium article does a good job comparing the bartlett and capon in the presence of multiple sources.

Coherence of input signals

MVDR assumes that the input signals are incoherent, and performs considerably worse when that assumption is false. Let’s look at what the ‘signal’ matrix $y$ (switching notation to $Y$) would look like: \(\begin{align} Y_{N\times T} = \begin{pmatrix} y_{11} & y_{12} & y_{13} & \dots \text{T samples} \\ y_{21} & y_{22} & \dots \\ \dots \\ \text{N elements} \end{pmatrix}=\begin{pmatrix} \vec{y}_{1} \\ \vec{y}_{2} \\ \dots \\ \vec{y}_{N} \end{pmatrix} \end{align}\)

If you assume that the signals are phase coherent, then the phases are approximately equal, $\angle\vec{y}_{1}\approx \angle\vec{y}_{2}\approx\dots$, for each element. When you take the outer product to compute $R_{YY}=\mathbf{E}[YY^H]$, you’ll end up with a fairly uniform matrix (all the elements approximately equal). The result is that the optimization step \(\begin{align} \min_{w} \quad & w^{*} R_{YY} w \end{align}\) cannot easily select different directions via $w$, and will output a relatively flat spectrum as $\theta$ is varied.

The easy way to check if this is the problem with $R_{YY}$ is to compute its condition number $\kappa$: the larger $\kappa$ is, the closer the matrix is to singular (uniform).
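As a rough illustration of that check, here is a sketch using `np.linalg.cond` on two made-up extremes: independent noise on every element (covariance close to a scaled identity) and the same in-phase signal on every element with only a little noise (covariance close to uniform). The sizes and noise levels are arbitrary assumptions, and what counts as "too large" for $\kappa$ is a judgment call.

```python
import numpy as np

rng = np.random.default_rng(3)
n_elements, n_samples = 4, 5000

def complex_noise(shape, std):
    """Complex Gaussian samples with the given per-component standard deviation."""
    return std * (rng.standard_normal(shape) + 1j * rng.standard_normal(shape))

def sample_covariance(Y):
    """Sample estimate of E[Y Y^H] for an N x T data matrix."""
    return Y @ Y.conj().T / Y.shape[1]

# Case 1: independent noise on every element, so R is close to a scaled identity
Y_independent = complex_noise((n_elements, n_samples), 1.0)

# Case 2: the same signal, in phase, on every element plus a little noise,
# so every entry of R is approximately equal
x = complex_noise(n_samples, 1.0)
Y_coherent = np.tile(x, (n_elements, 1)) + complex_noise((n_elements, n_samples), 0.01)

kappa_independent = np.linalg.cond(sample_covariance(Y_independent))
kappa_coherent = np.linalg.cond(sample_covariance(Y_coherent))
```

The first condition number stays close to 1, while the second is several orders of magnitude larger, which is the near-singular behaviour described above.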

(Cover image courtesy of Analog Devices)

¹ The fun part about this post is that I get to use big words, despite barely grazing the surface of their actual complexity :)